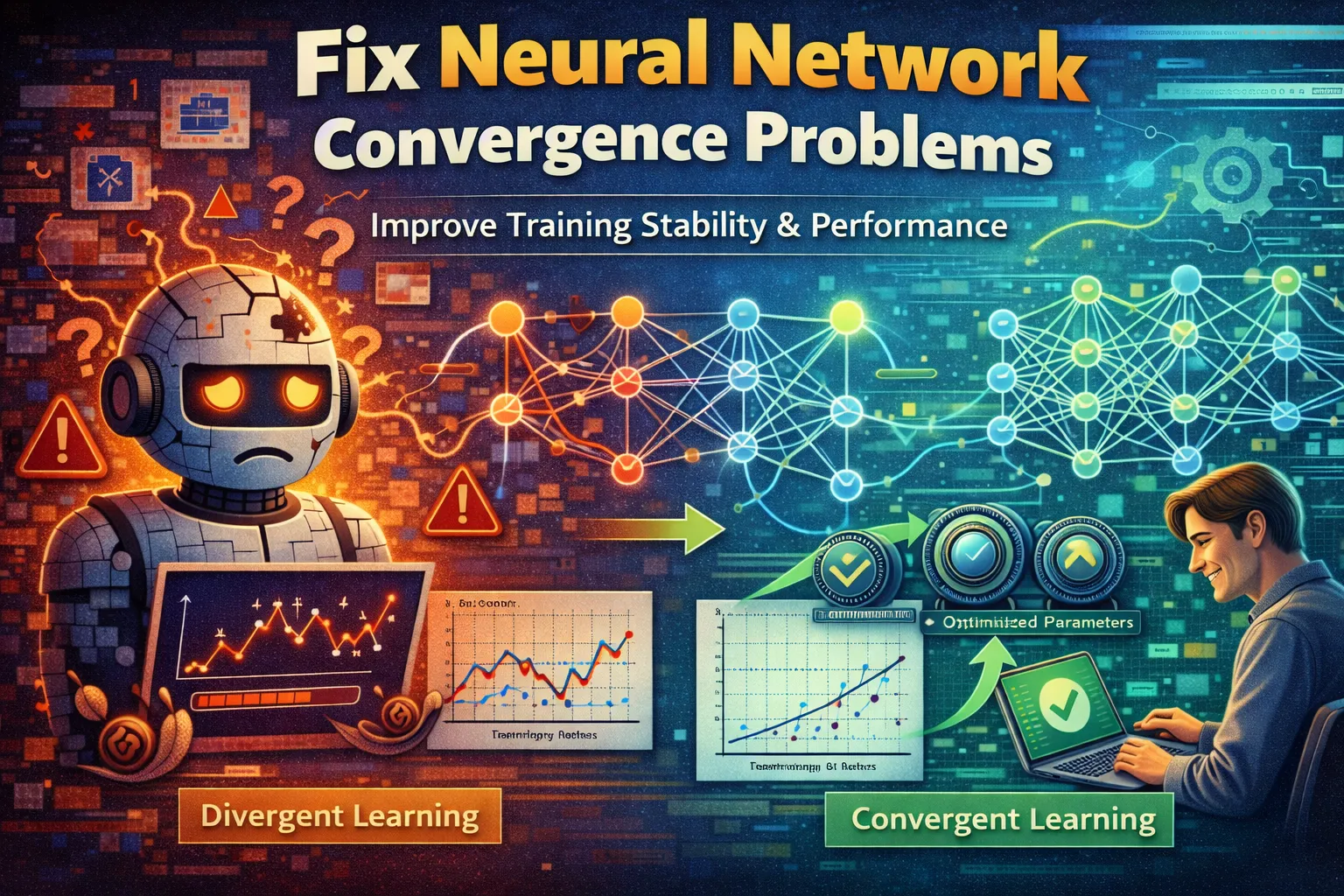

6 Critical Ways to Fix Neural Network Convergence Problems

Your neural network training has stalled. The loss curve isn’t trending down—it’s flatlining, oscillating wildly, or has exploded into NaN. This failure to converge means your model isn’t learning, wasting hours of compute time and leaving your project dead in the water. Neural network convergence is the fundamental process where a model iteratively adjusts its parameters to minimize error, but common pitfalls in data, architecture, and optimization can halt it completely. This guide cuts through the frustration with six actionable, expert-level fixes. We’ll move from quick diagnostic checks to advanced stabilization techniques to get your training back on track and your loss curve moving in the right direction.

What Causes Neural Network Convergence Problems?

Diagnosing the root cause is half the battle. Convergence issues rarely have a single source; they’re often a cascade of interacting problems. Pinpointing the likely culprit saves you from applying fixes blindly and guides you to the most effective solution.

- Poorly Scaled or Unnormalized Input Data: Features with vastly different ranges (e.g., age 0–100 vs. salary 0–200,000) create a poorly conditioned loss landscape that gradient descent navigates inefficiently. The optimizer is then forced to use very small learning rates, which hurts both convergence speed and stability.

- Inappropriately Set Learning Rate: This is one of the most common hyperparameter failures. A rate that’s too high causes updates to overshoot the loss minimum, leading to oscillation or divergence. A rate that’s too low makes progress painfully slow, and training can stall on a plateau or in a suboptimal local minimum long before it reaches a good solution.

- Exploding or Vanishing Gradients: In deep networks, repeated multiplication during backpropagation can cause gradient values to grow exponentially (explode) or shrink toward zero (vanish). Both undermine training stability: exploding gradients produce huge weight updates that can overflow to NaN, while vanishing gradients all but stop learning in the early layers.

- Inadequate Model Capacity or Architecture: A network that is too simple cannot capture the complexity of your data, causing loss to plateau at a high value. Conversely, poor architectural choices (like using Sigmoid activations in deep nets) can actively induce gradient problems that prevent stable neural network convergence.

Understanding these core issues allows you to systematically apply the following fixes, each designed to target a specific failure mode in the neural network convergence process.

Fix 1: Normalize or Standardize Your Input Data

This is your first and most critical step. Unscaled data forces your model to operate on a wildly uneven playing field, making gradient descent unstable and slow. Normalization brings all input features to a similar scale (typically 0–1 or mean 0, std 1), creating a smooth, well-conditioned loss landscape for efficient optimization and reliable neural network convergence.

- Step 1: Calculate the statistical properties of your training set. For standardization, compute the mean (μ) and standard deviation (σ) for each feature. For min-max normalization, find the minimum and maximum values.

- Step 2: Apply the transformation. For standardization, use the formula: X_scaled = (X - μ) / σ. For min-max normalization: X_scaled = (X - X_min) / (X_max - X_min).

- Step 3: Crucially, save the calculated μ, σ, min, and max values from the training set. You must use these exact same values to transform your validation and test sets, not recalculate them from those datasets.

- Step 4: Re-initialize your model’s weights and begin training again. You should observe a more stable and rapid decrease in loss from the very first epoch.

After applying this fix, the descent should be noticeably smoother, and the initial loss is often lower as well. This foundational step frequently resolves erratic training behavior on its own and is close to non-negotiable for stable neural network convergence.
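The steps above can be sketched in plain Python without any framework. This is a minimal illustration rather than a production pipeline: the function names fit_standardizer and transform and the toy age/salary rows are made up for the example, and it uses the population standard deviation (dividing by n).

```python
import math

def fit_standardizer(rows):
    """Compute per-feature mean and standard deviation from the TRAINING rows only."""
    n = len(rows)
    n_features = len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(n_features)]
    stds = []
    for j in range(n_features):
        var = sum((r[j] - means[j]) ** 2 for r in rows) / n
        # A constant feature has zero spread; fall back to 1.0 to avoid dividing by zero.
        stds.append(math.sqrt(var) or 1.0)
    return means, stds

def transform(rows, means, stds):
    """Apply X_scaled = (X - mean) / std using previously saved statistics."""
    return [[(x - m) / s for x, m, s in zip(r, means, stds)] for r in rows]

# Hypothetical toy data: [age, salary]
train = [[25.0, 40000.0], [35.0, 60000.0], [45.0, 80000.0]]
test = [[30.0, 50000.0]]

means, stds = fit_standardizer(train)        # Step 1: statistics from training data
train_scaled = transform(train, means, stds) # Step 2: transform the training set
test_scaled = transform(test, means, stds)   # Step 3: reuse the SAME statistics
```

Note that the test row is scaled with the training mean and standard deviation, so both features land on a comparable scale without leaking test-set statistics into preprocessing.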

Fix 2: Implement a Learning Rate Schedule

Using a fixed, static learning rate is a major limitation for neural network convergence. A schedule dynamically reduces the rate during training, allowing for large, exploratory updates early on and fine-tuned, precise updates later. This strategy directly combats oscillation around a minimum and helps push the model into deeper, more optimal convergence points.

- Step 1: Choose a schedule strategy. A simple exponential decay (e.g., lr = initial_lr * decay_rate^(epoch / decay_steps)) or a step decay (halving the rate every N epochs) are excellent starting points.

- Step 2: In your training loop (e.g., in TensorFlow/Keras or PyTorch), implement the logic to update the learning rate at the end of each epoch or after a set number of steps. Most frameworks have built-in schedulers like tf.keras.optimizers.schedules.ExponentialDecay or torch.optim.lr_scheduler.StepLR.

- Step 3: Set aggressive but reasonable starting parameters. For example, start with an initial learning rate 5–10 times higher than your previous static rate, and set the decay to reduce it by half every 20–30 epochs.

- Step 4: Monitor the training loss. You should see rapid initial progress followed by a period of steadier, more refined improvement as the rate decreases, which is the hallmark of healthy neural network convergence.

A proper schedule will show a clean, downward-trending loss curve without the “bouncing” characteristic of a rate that’s too high in the late stages of training. This is a key technique for achieving deep neural network convergence.
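To make the two schedules from Step 1 concrete, here is a small framework-free sketch of the formulas. The function names and the example values (initial rate 0.01, halving every 20 epochs) are illustrative assumptions, not settings recommended for any particular model.

```python
def exponential_decay(initial_lr, decay_rate, decay_steps, epoch):
    """Smooth decay: lr = initial_lr * decay_rate ** (epoch / decay_steps)."""
    return initial_lr * decay_rate ** (epoch / decay_steps)

def step_decay(initial_lr, drop_factor, epochs_per_drop, epoch):
    """Staircase decay: multiply by drop_factor once every epochs_per_drop epochs."""
    return initial_lr * drop_factor ** (epoch // epochs_per_drop)

# Compare both schedules at a few epochs.
for epoch in (0, 10, 20, 40):
    smooth = exponential_decay(0.01, 0.5, 20, epoch)
    stepped = step_decay(0.01, 0.5, 20, epoch)
    print(f"epoch {epoch:2d}  exponential={smooth:.5f}  step={stepped:.5f}")
```

Both schedules agree at multiples of 20 epochs; between those points the exponential version shrinks the rate gradually while the step version holds it constant.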

Fix 3: Apply Gradient Clipping

When the loss suddenly spikes to NaN, exploding gradients are a prime suspect and one of the most disruptive threats to stable training. Gradient clipping is a direct, surgical fix: it caps the magnitude of the gradients after backpropagation and before the weight update, preventing the massive updates that destabilize training and wreck your model’s parameters.

- Step 1: Identify the need. If your training loss becomes NaN (Not a Number) or increases exponentially before crashing, exploding gradients are the likely cause.

- Step 2: In your optimizer configuration, set the gradient clipping norm. In TensorFlow/Keras, add the clipnorm or clipvalue argument to your optimizer (e.g., tf.keras.optimizers.Adam(clipnorm=1.0)). In PyTorch, call torch.nn.utils.clip_grad_norm_(model.parameters(), max_norm=1.0) after computing gradients but before the optimizer step. Note that Keras’s clipnorm clips each gradient tensor separately; use global_clipnorm to clip the combined norm of all gradients, which is what PyTorch’s clip_grad_norm_ does.

- Step 3: Start with a conservative clipping value. A common and effective starting point is to clip the global norm of all gradients to 1.0 or 5.0. This is often enough to control explosions while still allowing meaningful updates.

- Step 4: Resume training. The NaN values should disappear, and the loss should begin to behave predictably again, allowing neural network convergence to resume its path toward an accurate model.

This fix acts as a safety net, ensuring training stability in deep or recurrent networks prone to gradient instability. It’s essential for neural network convergence in complex architectures like LSTMs or very deep CNNs.
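For intuition about what global-norm clipping does to the numbers, here is a minimal plain-Python sketch. The helper clip_by_global_norm and the toy gradient values are hypothetical; in practice you would rely on your framework's built-in clipping rather than this function.

```python
import math

def clip_by_global_norm(grads, max_norm):
    """Rescale a list of gradient vectors so their combined L2 norm is at most max_norm.

    Returns the (possibly rescaled) gradients and the norm measured before clipping.
    """
    total_norm = math.sqrt(sum(g * g for vec in grads for g in vec))
    if total_norm <= max_norm:
        return grads, total_norm          # already small enough, leave untouched
    scale = max_norm / total_norm
    return [[g * scale for g in vec] for vec in grads], total_norm

# Toy gradients for two parameter groups; global norm = sqrt(9 + 16 + 144) = 13
grads = [[3.0, 4.0], [12.0]]
clipped, norm_before = clip_by_global_norm(grads, max_norm=1.0)
norm_after = math.sqrt(sum(g * g for vec in clipped for g in vec))
```

Because every component is multiplied by the same factor, the update direction is preserved and only its length shrinks. Clipping by value, in contrast, caps each component independently and can change the direction.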

Fix 4: Switch to a More Robust Activation Function

Using Sigmoid or Tanh activations in deep networks is a primary cause of the vanishing gradient problem, which halts neural network convergence in early layers. Switching to Rectified Linear Unit (ReLU) or its variants (Leaky ReLU, ELU) ensures gradients can flow backward more effectively, directly enabling stable neural network convergence in modern architectures.

- Step 1: Identify all activation functions in your model’s hidden layers. Replace any Sigmoid (tf.nn.sigmoid, torch.sigmoid) or Tanh functions.

- Step 2: Substitute them with ReLU (tf.nn.relu, torch.nn.ReLU()) as a robust default. For layers where you suspect “dying ReLU” (neurons outputting only zero), use Leaky ReLU with a small slope like 0.01.

- Step 3: In your output layer, keep the activation appropriate for your task (e.g., Softmax for classification, linear for regression). Do not change this.

- Step 4: Re-initialize your model weights and restart training. Monitor the gradient flow; you should see active learning across all layers.

This change often results in faster initial loss reduction and prevents the training loss from plateauing prematurely. It’s a foundational fix for deep learning stability and neural network convergence.
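A rough back-of-the-envelope calculation shows why the switch matters. The sketch below multiplies the activation derivative across 20 layers while ignoring the weight matrices entirely, which is a deliberate simplification; the function names are made up for illustration.

```python
import math

def sigmoid_grad(z):
    """Derivative of the sigmoid; its maximum value is 0.25, reached at z = 0."""
    s = 1.0 / (1.0 + math.exp(-z))
    return s * (1.0 - s)

def relu_grad(z):
    """Derivative of ReLU: 1 for positive inputs, 0 otherwise."""
    return 1.0 if z > 0 else 0.0

def leaky_relu_grad(z, slope=0.01):
    """Derivative of Leaky ReLU: small but nonzero for negative inputs."""
    return 1.0 if z > 0 else slope

def chained_factor(grad_fn, z, depth):
    """Product of `depth` identical activation derivatives (weights ignored)."""
    return grad_fn(z) ** depth

depth = 20
sigmoid_factor = chained_factor(sigmoid_grad, 0.0, depth)  # best case: 0.25 ** 20
relu_factor = chained_factor(relu_grad, 1.0, depth)        # active units: 1.0 ** 20
```

Even in the sigmoid's best case the gradient reaching the first layer is scaled by roughly 1e-12, while an active ReLU path passes it through unchanged. The Leaky ReLU derivative stays at 0.01 for negative inputs, which is why it helps with units that would otherwise go silent.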

Fix 5: Add Batch Normalization Layers

Internal covariate shift, where the distribution of layer inputs changes during training, forces constant adaptation and slows neural network convergence. Batch Normalization layers stabilize this shifting mean and variance, which allows higher learning rates, reduces sensitivity to initialization, and acts as a mild regularizer that can improve generalization and training stability.

- Step 1: Insert a Batch Normalization layer immediately after the linear/convolutional layer and before the activation function in your network architecture. This is the most common placement.

- Step 2: In frameworks like Keras, use tf.keras.layers.BatchNormalization(). In PyTorch, use torch.nn.BatchNorm1d or torch.nn.BatchNorm2d, matching the layer type and passing the number of features or channels.

- Step 3: Try a higher learning rate than you would use without BatchNorm. You can often increase your rate by a factor of 5 or 10, as the normalization reduces the risk of divergence during sensitive training phases.

- Step 4: Train the model. You should observe a much smoother and more consistent drop in loss per epoch, with reduced oscillation and faster overall neural network convergence.

BatchNorm acts as a powerful stabilizer, making the optimization landscape significantly smoother. It’s especially critical for neural network convergence in very deep networks.
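To show what the layer computes, here is a minimal sketch of the training-time forward pass for a single feature. It is a simplification: real framework layers learn gamma and beta, keep running statistics for inference, and operate on whole tensors. The function name batch_norm and the sample values are assumptions for the example.

```python
import math

def batch_norm(batch, gamma=1.0, beta=0.0, eps=1e-5):
    """Normalize one feature across a mini-batch, then scale by gamma and shift by beta."""
    mean = sum(batch) / len(batch)
    var = sum((x - mean) ** 2 for x in batch) / len(batch)
    return [gamma * (x - mean) / math.sqrt(var + eps) + beta for x in batch]

# Pre-activation outputs of a linear layer for a mini-batch of four samples.
pre_activations = [10.0, 12.0, 14.0, 16.0]

# Linear -> BatchNorm -> activation, matching the placement described in Step 1.
normalized = batch_norm(pre_activations)
activated = [max(0.0, v) for v in normalized]  # ReLU applied after normalization
```

The normalized values have a mean near 0 and a variance near 1 regardless of how large the raw pre-activations were, which is the property that keeps the next layer's inputs in a stable range.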

Fix 6: Systematically Tune Hyperparameters with a Search

Neural network convergence failure is often due to a poor combination of hyperparameters like learning rate, batch size, and optimizer settings. Manual tuning is inefficient. Implementing a systematic search (like Random or Bayesian Search) explores the hyperparameter space intelligently to find a combination that reliably drives loss down, ensuring your model achieves neural network convergence.

- Step 1: Define a search space. Key parameters to tune are learning rate (log scale, e.g., 1e-5 to 1e-1), batch size (powers of 2), and optimizer type (Adam, SGD with momentum).

- Step 2: Choose a search method. Use a library like scikit-learn’s RandomizedSearchCV for a random search or Optuna/Ray Tune for more advanced Bayesian optimization.

- Step 3: Set an objective metric, typically the validation loss after a fixed number of epochs, to evaluate each trial. This directly measures progress toward neural network convergence.

- Step 4: Run the search. Analyze the top-performing trials to identify the optimal hyperparameter set, then train your final model with those values to achieve reliable neural network convergence.

A successful search will yield a configuration whose validation loss trends steadily downward, even if it is not strictly monotonic from epoch to epoch. This is the most reliable way to take the guesswork out of hyperparameters and secure robust neural network convergence.
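The search loop itself is simple enough to sketch with the standard library. Everything here is illustrative: sample_config, random_search, and especially toy_objective are hypothetical names, and the objective is a stand-in that pretends the best learning rate is 1e-3 instead of training a real model.

```python
import math
import random

def sample_config(rng):
    """Draw one configuration; the learning rate is sampled uniformly in log space."""
    log_lr = rng.uniform(math.log10(1e-5), math.log10(1e-1))
    return {
        "learning_rate": 10 ** log_lr,
        "batch_size": rng.choice([16, 32, 64, 128, 256]),
        "optimizer": rng.choice(["adam", "sgd_momentum"]),
    }

def random_search(objective, n_trials, seed=0):
    """Evaluate n_trials random configurations and return them sorted by loss."""
    rng = random.Random(seed)
    trials = []
    for _ in range(n_trials):
        config = sample_config(rng)
        trials.append((objective(config), config))
    trials.sort(key=lambda t: t[0])  # lowest loss first
    return trials

def toy_objective(config):
    # Hypothetical stand-in for "validation loss after a fixed number of epochs".
    # A real objective would train the model with `config` and return its loss.
    return abs(math.log10(config["learning_rate"]) + 3.0)

results = random_search(toy_objective, n_trials=20)
best_loss, best_config = results[0]
```

Sampling the learning rate in log space gives each order of magnitude an equal chance of being tried, which a uniform draw between 1e-5 and 1e-1 would not.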

When Should You See a Professional?

If you have meticulously applied all six fixes—from data normalization to systematic hyperparameter tuning—and your model still fails to achieve neural network convergence, the issue likely transcends code configuration and points to a deeper, systemic problem.

This persistent neural network convergence failure may indicate fundamental data mismatch (where your training data does not contain the patterns needed for the task), severe overfitting on microscopic datasets, or a critical bug in your custom layer implementation or loss function that corrupts gradient computation. For complex projects, consulting an expert or reviewing your approach on a platform like Stack Overflow can provide the architectural insight needed. In a professional setting, this is the point where you would escalate to a senior machine learning engineer or a research scientist to audit the entire project pipeline.

Do not waste weeks on a broken approach; seeking a professional review can diagnose obscure neural network convergence issues in hours and get your project back on track.

Frequently Asked Questions About Neural Network Convergence

How long should I train my neural network before deciding it won’t converge?

There’s no universal epoch count, but you need a diagnostic framework for assessing neural network convergence. First, ensure you’re monitoring validation loss, not just training loss. If the validation loss hasn’t decreased meaningfully (e.g., by more than 1%) after 50–100 epochs on a reasonably sized dataset with the fixes applied (proper normalization, learning rate schedule), neural network convergence is likely stalled. Second, use early stopping with a patience of 10–20 epochs; if no improvement occurs within that window, the training has effectively plateaued. The key is to look for a sustained downward trend. If the loss curve is perfectly flat or chaotically noisy from the start, halt training immediately and re-apply diagnostic fixes like gradient clipping or checking your data pipeline.
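The patience rule described above can be written as a short check over the validation-loss history. This is a minimal sketch with an assumed function name and a made-up loss history; framework callbacks typically add extras such as restoring the best weights.

```python
def early_stopping_epoch(val_losses, patience=10, min_delta=0.0):
    """Return the 0-based epoch at which training would stop, or None if it never does.

    An epoch counts as an improvement only if it beats the best loss by more than min_delta.
    """
    best = float("inf")
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best - min_delta:
            best = loss
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return epoch
    return None

# Hypothetical validation losses: the best value (0.69) occurs at epoch 3.
history = [1.00, 0.80, 0.70, 0.69, 0.70, 0.71, 0.70, 0.72]
stop_at = early_stopping_epoch(history, patience=3)  # stops at epoch 6
```

With a patience of 3, the three epochs after the best one fail to improve on 0.69, so training halts at epoch 6 rather than running through the remaining data.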

Can a neural network converge to the wrong solution?

Yes, this is a common outcome known as converging to a poor local minimum or a saddle point. Your model’s loss stops decreasing, but it settles on a solution with high error because the optimizer got trapped in a suboptimal region of the loss landscape. This type of failed neural network convergence is especially prevalent with inadequate model capacity, poor initialization, or an excessively low learning rate. Techniques like using Adam (which handles saddle points better than vanilla SGD), adding Batch Normalization, and employing learning rate schedules are specifically designed to help the model escape these traps and achieve genuine neural network convergence. Furthermore, a model can converge to a solution that performs well on training data but fails on unseen data (overfitting), which is a different type of “wrong” solution addressed by regularization.

What is the difference between convergence and overfitting?

Neural network convergence refers to the optimization process successfully finding a set of model parameters that minimize the training loss. Overfitting occurs when the model learns the noise and specific details of the training set to such a degree that it harms performance on new, unseen data. You can have perfect convergence (training loss near zero) but severe overfitting (high validation loss). The hallmark of overfitting is a growing gap between training and validation loss curves as epochs increase. To ensure a healthy outcome, you must monitor both metrics and employ techniques like dropout, weight decay (L2 regularization), and early stopping to prevent the model from memorizing rather than generalizing.

Why does my loss go down and then back up during training?

This “valley-and-peak” pattern is a clear sign of unstable neural network convergence, most commonly caused by a learning rate that is too high. The optimizer takes steps that are too large, overshooting the loss minimum and causing the loss to bounce back up. It can also be caused by a batch size that is too small, leading to noisy gradient estimates that pull the model in conflicting directions each update. To fix this instability and restore neural network convergence, first implement a learning rate schedule to reduce the rate over time. Second, consider gradient clipping to prevent any single update from being catastrophically large. Finally, increasing your batch size (if memory allows) can provide a smoother, more consistent gradient estimate and steadier neural network convergence.
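The overshoot mechanism is easy to reproduce on a toy problem. The sketch below runs plain gradient descent on f(x) = x², an assumption chosen because its behavior is easy to verify by hand; real loss surfaces are far less regular, so treat this as an illustration of the mechanism only.

```python
def gradient_descent(lr, steps=5, x0=1.0):
    """Minimize f(x) = x**2 with a fixed learning rate and return the loss after each step."""
    x = x0
    losses = [x * x]
    for _ in range(steps):
        x -= lr * 2.0 * x  # gradient of x**2 is 2x
        losses.append(x * x)
    return losses

stable = gradient_descent(lr=0.1)     # each step multiplies x by 0.8: loss shrinks
diverging = gradient_descent(lr=1.1)  # each step multiplies x by -1.2: loss grows
```

With the smaller rate every step lands closer to the minimum. With the larger rate each update jumps past the minimum to a point farther away on the other side, so the loss climbs with every step, which is the same dynamic behind a loss curve that turns back upward.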

Conclusion

Ultimately, achieving stable neural network convergence is a systematic engineering challenge, not a mystery. By methodically applying these six fixes—normalizing your data, implementing a learning rate schedule, clipping exploding gradients, switching to robust activations like ReLU, adding Batch Normalization, and conducting a hyperparameter search—you address the root causes of neural network convergence failure from data preprocessing to optimization. Each technique targets a specific instability, transforming a chaotic, non-converging training run into a smooth, predictable descent toward an accurate model.

Start with Fix 1 and work your way down the list, as each solution builds upon the last. Remember, persistence paired with the right diagnostic approach will solve even the most stubborn neural network convergence problem. Share your success in the comments below—which fix finally got your model to converge?

Visit TrueFixGuides.com for more.

Written & Tested by: Antoine Lamine

Lead Systems Administrator