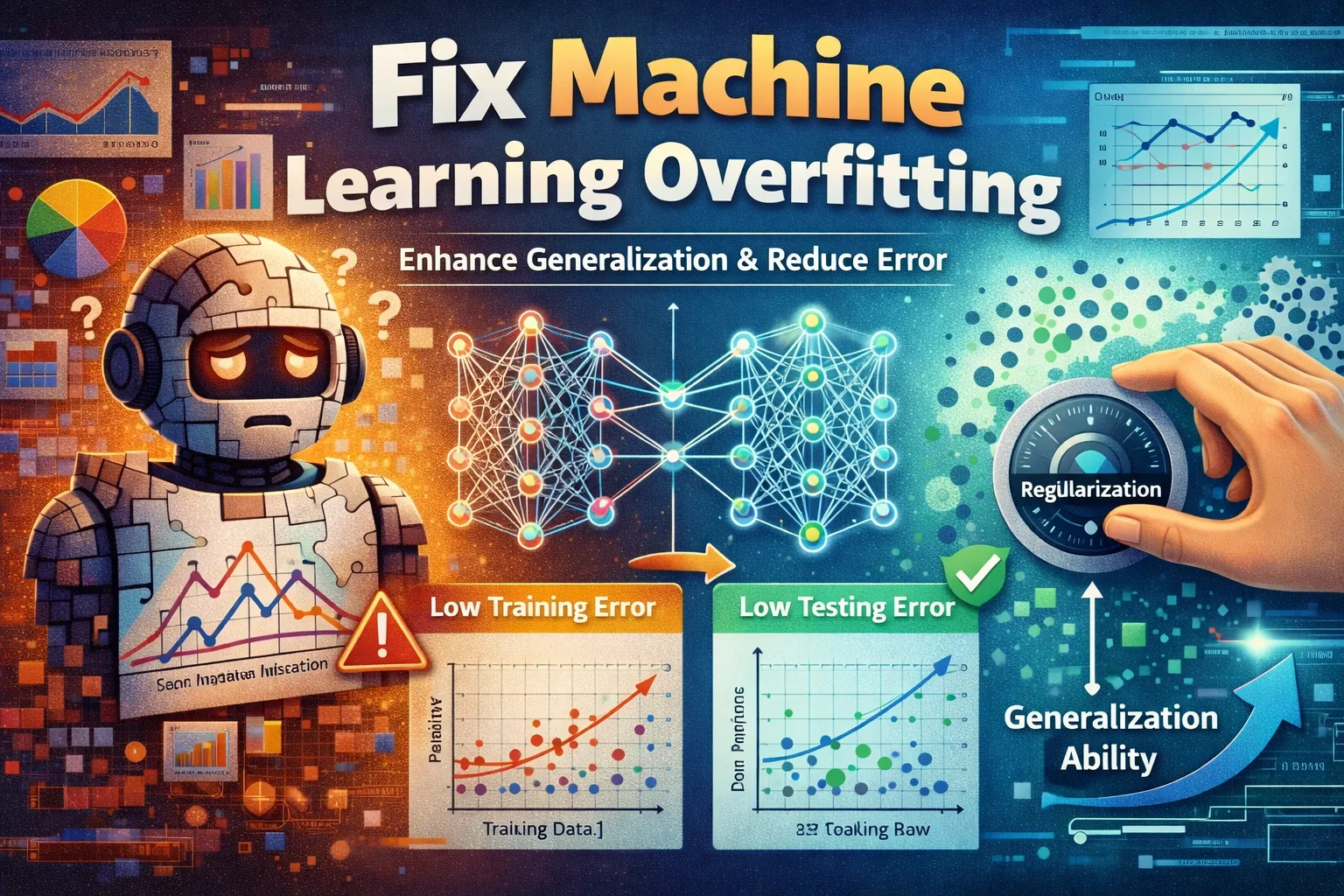

6 Critical Ways to Fix Machine Learning Overfitting

Your model aces the training data with 99% accuracy but flops on new, unseen examples. This scenario, where a model learns the “noise” in the training set instead of the underlying pattern, is called overfitting. It is one of the most common roadblocks to deploying a robust, generalizable model, and a model that suffers from it is unreliable for real-world prediction. This guide covers six practical fixes data scientists use to push models toward the signal rather than the noise. We’ll start with foundational techniques and build to more advanced strategies.

What Causes Machine Learning Overfitting?

Fixing overfitting starts with understanding its root causes. It is not random; it results from specific imbalances between your model and your data.

- Excessively Complex Model: Using a model with too many parameters (like a deep neural network with millions of weights) for a simple dataset gives it enough “memory” to memorize every training sample, including irrelevant noise, instead of generalizing.
- Insufficient or Poor-Quality Training Data: A model trained on a small, noisy, or non-representative dataset has no choice but to fit the limited examples perfectly, learning spurious correlations that won’t hold up on new data.
- Training for Too Many Epochs: In iterative training, continuing to train a model long after it has learned the true pattern forces it to start fitting the random fluctuations in the training data, leading to worsening validation performance.
- Lack of Effective Regularization: Without techniques that explicitly penalize model complexity during training, there is nothing to stop the model’s weights from becoming overly tuned to the training set’s idiosyncrasies.

These causes directly inform the fixes below, which add constraints, improve the data, and monitor training so that overfitting does not take hold.

Fix 1: Implement Cross-Validation

This is your primary diagnostic tool. Cross-validation does not prevent overfitting directly, but it gives an honest, robust estimate of how your model will perform on unseen data, which exposes the problem early. It works by repeatedly splitting your data into different training and validation sets.

- Step 1: Choose a cross-validation strategy. For most problems, start with k-fold (e.g., 5-fold or 10-fold) cross-validation.
- Step 2: Split your entire dataset into ‘k’ equal-sized groups (folds).
- Step 3: Train your model ‘k’ times. Each time, use a different fold as the validation set and the remaining k-1 folds as the training set.
- Step 4: Record the performance metric (e.g., accuracy) on the validation fold for each of the ‘k’ runs, then calculate the average and standard deviation.

If the model is overfitting, you’ll see high variance in scores across folds or a large gap between training-fold scores and validation-fold scores. The averaged validation score is a far more reliable performance indicator than a single train/test split, which makes cross-validation an essential first diagnostic step.
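The four steps can be sketched in plain NumPy. This is a minimal illustration, not a reference implementation: the helper name k_fold_scores, the synthetic dataset, and the choice of an unregularized least-squares model with mean squared error as the metric are all assumptions made for the example. The data deliberately has almost as many features as training samples, so the train/validation gap is easy to see.

```python
import numpy as np

def k_fold_scores(X, y, k=5, seed=0):
    """Return an array of (train_mse, val_mse) pairs, one row per fold."""
    rng = np.random.default_rng(seed)
    indices = rng.permutation(len(X))       # shuffle once before splitting
    folds = np.array_split(indices, k)      # k nearly equal-sized folds
    scores = []
    for i in range(k):
        val_idx = folds[i]
        train_idx = np.concatenate([folds[j] for j in range(k) if j != i])
        # Fit ordinary least squares on the k-1 training folds.
        w, *_ = np.linalg.lstsq(X[train_idx], y[train_idx], rcond=None)
        train_mse = np.mean((X[train_idx] @ w - y[train_idx]) ** 2)
        val_mse = np.mean((X[val_idx] @ w - y[val_idx]) ** 2)
        scores.append((train_mse, val_mse))
    return np.array(scores)

# Hypothetical data: 40 samples, 30 features, only the first feature matters.
rng = np.random.default_rng(42)
X = rng.normal(size=(40, 30))
y = 2.0 * X[:, 0] + rng.normal(scale=0.5, size=40)

scores = k_fold_scores(X, y, k=5)
train_mean, val_mean = scores.mean(axis=0)
val_std = scores[:, 1].std()
print(f"train MSE {train_mean:.3f} | val MSE {val_mean:.3f} +/- {val_std:.3f}")
```

A validation error far above the training error, together with a large spread across folds, is the pattern described above. In a real project a library routine such as scikit-learn's cross-validation helpers would normally replace the hand-written loop.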

Fix 2: Apply L1 or L2 Regularization

Regularization directly attacks overfitting by penalizing model complexity during training. It adds a “cost” to the loss function for having large weights, forcing the model to find a simpler, more general solution. L2 (Ridge) shrinks weights toward zero, while L1 (Lasso) can set some of them to exactly zero.

- Step 1: Identify where to add regularization. For linear/logistic regression, it’s a hyperparameter. In neural networks, add it as a kernel_regularizer in your layers.
- Step 2: Select a regularization type. Start with L2 regularization, as it’s more stable. Set a small lambda (λ) value (e.g., 0.01).
- Step 3: Modify your model’s loss function. The new loss becomes Original Loss + λ · Σ(weights²) for L2, or Original Loss + λ · Σ|weights| for L1.
- Step 4: Retrain your model. Monitor the validation loss. The training loss may be slightly higher, but the validation loss should improve and the gap between the two should narrow, which is the key signal that overfitting is being corrected.

A properly regularized model will have smaller weight values and will be less sensitive to minor fluctuations in the input data, which is the hallmark of better generalization.
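To make the L2 penalty concrete, here is a small sketch for linear regression, where the penalized loss (sum of squared errors + λ · Σ weights²) has a closed-form minimizer. The function names, the synthetic data, and the value lam=5.0 are assumptions chosen for illustration; the right λ depends on how the loss is scaled and should be tuned on validation data.

```python
import numpy as np

def fit_ridge(X, y, lam):
    """Closed-form minimizer of ||Xw - y||^2 + lam * ||w||^2."""
    n_features = X.shape[1]
    return np.linalg.solve(X.T @ X + lam * np.eye(n_features), X.T @ y)

def mse(X, y, w):
    """Mean squared error of the linear model w on (X, y)."""
    return float(np.mean((X @ w - y) ** 2))

# Hypothetical data: 20 features, only the first three carry signal.
rng = np.random.default_rng(0)
true_w = np.zeros(20)
true_w[:3] = [1.5, -2.0, 0.5]
X_train = rng.normal(size=(30, 20))
y_train = X_train @ true_w + rng.normal(scale=1.0, size=30)
X_val = rng.normal(size=(200, 20))
y_val = X_val @ true_w + rng.normal(scale=1.0, size=200)

w_plain = fit_ridge(X_train, y_train, lam=0.0)   # no penalty: ordinary least squares
w_l2 = fit_ridge(X_train, y_train, lam=5.0)      # L2-penalized fit

for name, w in [("no penalty", w_plain), ("L2, lam=5", w_l2)]:
    print(f"{name:>10}: |w| = {np.linalg.norm(w):.2f}, "
          f"train MSE = {mse(X_train, y_train, w):.2f}, "
          f"val MSE = {mse(X_val, y_val, w):.2f}")
```

The penalized fit always has a smaller weight norm and a training error at least as high as the unpenalized one. Whether the validation error improves depends on the data and on λ, which is why Step 4 asks you to monitor it.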

Fix 3: Use Dropout for Neural Networks

Dropout is a stochastic regularization technique designed specifically for neural networks. During training, it randomly “drops out” (sets to zero) a fraction of a layer’s neurons on each update, preventing any single neuron from becoming overly specialized and forcing the network to learn robust, distributed features.

- Step 1: Identify layers for dropout. Apply dropout to dense or convolutional layers, typically after the activation function. Avoid the output layer.
- Step 2: Add a Dropout layer in your model architecture. In frameworks like TensorFlow/Keras, you add it as Dropout(rate=0.5), where the rate is the fraction of neurons to randomly disable.
- Step 3: Choose a dropout rate. A common starting point is 0.5 (50%) for hidden layers and 0.2–0.3 for input layers. This is a key hyperparameter to tune.
- Step 4: Train your model. During inference (prediction), dropout is turned off and all neurons are used. Keras uses “inverted” dropout, which scales the surviving activations up by 1/(1 − rate) during training, so no rescaling is needed at prediction time. (The original formulation instead scales outputs by the keep probability, 1 − rate, at inference.)

You should observe that the network trains more slowly but reaches a lower and more stable validation error, because it can no longer rely on specific co-adapted neurons memorizing the data, which is a major mechanism behind overfitting in deep nets.
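The train-versus-inference behavior is easier to see in code. The function below is a hand-written NumPy sketch of inverted dropout, not the Keras implementation; its name and signature are assumptions made for this example.

```python
import numpy as np

def dropout(activations, rate, training, rng):
    """Inverted dropout: zero a fraction `rate` of units and rescale the rest."""
    if not training or rate == 0.0:
        return activations                     # inference: the layer is a no-op
    keep_prob = 1.0 - rate
    mask = rng.random(activations.shape) < keep_prob
    # Rescale survivors so the expected activation is unchanged.
    return activations * mask / keep_prob

rng = np.random.default_rng(0)
hidden = np.ones((1000, 64))                   # stand-in for a hidden layer's output

train_out = dropout(hidden, rate=0.5, training=True, rng=rng)
infer_out = dropout(hidden, rate=0.5, training=False, rng=rng)

print("fraction zeroed in training:", np.mean(train_out == 0.0))
print("mean activation in training:", train_out.mean())
print("inference output unchanged: ", np.array_equal(infer_out, hidden))
```

Because survivors are scaled by 1/(1 − rate) during training, the mean activation stays close to its original value and the inference path needs no correction.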

Fix 4: Apply Early Stopping During Training

This technique targets overfitting caused by training for too many epochs. Early stopping monitors the model’s performance on a validation set during training and halts the process once that performance has stopped improving for a set number of epochs, before the model starts memorizing noise. It’s an efficient, automatic way to find a good stopping point.

- Step 1: Split your data into training and validation sets. Reserve a portion (e.g., 20%) of your training data to serve as the validation monitor.
- Step 2: Configure the early stopping callback. In frameworks like Keras, use EarlyStopping(monitor='val_loss', patience=5, restore_best_weights=True). Set ‘patience’ to the number of epochs with no improvement to tolerate before stopping.
- Step 3: Train your model with the callback active. The training loop will log the validation metric after each epoch.
- Step 4: Let the callback stop training. With restore_best_weights=True, the model’s weights are restored to those from the epoch with the best validation score; without it, Keras keeps the weights from the final epoch.

Success is a training log that shows validation loss decreasing and then plateauing or increasing, at which point training stops. This is a low-effort safeguard against overfitting caused by excessive iteration.
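The patience logic itself is only a few lines. The sketch below simulates it over a pre-recorded list of validation losses rather than a real training run; the function name and the loss values are hypothetical, and a real loop would save and restore model weights where the comment indicates.

```python
def simulate_early_stopping(val_losses, patience=5):
    """Return (best_epoch, stopped_epoch) for a sequence of per-epoch validation losses."""
    best_loss = float("inf")
    best_epoch = -1
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_loss, best_epoch = loss, epoch
            epochs_without_improvement = 0
            # In a real training loop, save a copy of the model weights here.
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return best_epoch, epoch       # stop; restore weights from best_epoch
    return best_epoch, len(val_losses) - 1     # ran out of epochs without triggering

# Hypothetical validation curve: improves until epoch 3, then degrades.
val_losses = [0.90, 0.70, 0.55, 0.50, 0.52, 0.51, 0.53, 0.56, 0.60, 0.65]
best_epoch, stopped_epoch = simulate_early_stopping(val_losses, patience=3)
print(f"best epoch: {best_epoch}, training stopped at epoch: {stopped_epoch}")
```

Note that the epoch at which training stops is later than the best epoch by exactly the patience value, which is why restoring the best weights matters.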

Fix 5: Simplify Your Model Architecture

When your model has too much capacity (too many parameters) for your data, it will memorize instead of generalize. This fix reduces that capacity by removing layers or neurons, forcing the model to learn only the most important patterns. It’s the most direct way to address complexity-driven overfitting.

- Step 1: Evaluate your current architecture. Note the number of layers, neurons per layer, and total trainable parameters.
- Step 2: Create a simpler model. For a neural network, reduce the number of hidden layers by one or cut the number of neurons in each layer by 30–50%.
- Step 3: Retrain the simplified model using the same data and training procedure as your original complex model.
- Step 4: Compare validation performance. The simpler model may have slightly higher training error but should show a significantly smaller gap between training and validation accuracy, a reliable sign that overfitting has been reduced.

You’ve succeeded when the simpler model achieves comparable or better validation performance than the complex one. This is a foundational strategy that applies across model types.
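For Steps 1 and 2, it helps to see how quickly the parameter count drops. The helper below counts trainable parameters (weights plus biases) for a fully connected network; the function name and the layer sizes are made up for illustration.

```python
def count_dense_parameters(layer_sizes):
    """Trainable parameters of a fully connected net: weights plus biases per layer."""
    return sum(n_in * n_out + n_out
               for n_in, n_out in zip(layer_sizes, layer_sizes[1:]))

# Hypothetical architectures: input size, hidden layer sizes, output size.
original = [100, 256, 256, 10]       # two hidden layers of 256 units
fewer_layers = [100, 256, 10]        # one hidden layer removed
narrower = [100, 128, 128, 10]       # each hidden layer cut by 50%

for name, sizes in [("original", original),
                    ("fewer layers", fewer_layers),
                    ("narrower", narrower)]:
    print(f"{name:>12}: {count_dense_parameters(sizes):,} parameters")
```

In this example either change removes roughly two thirds of the parameters, because the 256-by-256 connection between the hidden layers dominates the total.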

Fix 6: Augment Your Training Data

Overfitting often stems from a model seeing too few examples. Data augmentation artificially expands your training dataset by creating modified, realistic versions of your existing data. This teaches the model to recognize the core pattern despite variations, which improves its ability to generalize to new, unseen samples when data is scarce.

- Step 1: Identify appropriate transformations. For images, use rotations, flips, zooms, or brightness changes. For text, use synonym replacement or back-translation.
- Step 2: Apply transformations on-the-fly during training. Use built-in tools (e.g., ImageDataGenerator in Keras, or the newer Keras preprocessing layers such as RandomFlip and RandomRotation) to apply random augmentations to each batch, so the model rarely sees the exact same sample twice.
- Step 3: Start with mild augmentations. A good starting point is random horizontal flips and small rotations (about ±10 degrees). Avoid distortions so severe the true label is no longer accurate.
- Step 4: Retrain your model with the augmented data pipeline. Monitor the validation loss curve; it should become smoother and converge to a lower value than before, indicating that overfitting is being reduced.

A successfully augmented dataset will cause training to progress more slowly, but the final model will be more robust, because it has learned invariant features rather than memorized specific examples.
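As a rough sketch of on-the-fly augmentation, the function below applies two of the mild transformations mentioned above, random horizontal flips and small brightness shifts, to a batch of images. It is a NumPy stand-in for a framework generator, not the Keras API; the function name, the parameter names, and the assumption that images are floats in [0, 1] with shape (N, H, W, C) are all choices made for this example.

```python
import numpy as np

def augment_batch(images, rng, flip_prob=0.5, max_brightness_shift=0.1):
    """Randomly flip images left-right and shift their brightness.

    Assumes float images in [0, 1] with shape (N, H, W, C).
    """
    augmented = images.copy()
    flip = rng.random(len(images)) < flip_prob
    augmented[flip] = augmented[flip, :, ::-1, :]      # reverse the width axis
    # One brightness offset per image, broadcast over height, width, channels.
    shifts = rng.uniform(-max_brightness_shift, max_brightness_shift,
                         size=(len(images), 1, 1, 1))
    return np.clip(augmented + shifts, 0.0, 1.0)

rng = np.random.default_rng(0)
batch = rng.random((8, 4, 4, 3))                       # hypothetical tiny image batch

# Call this once per batch inside the training loop so each epoch sees new variants.
augmented = augment_batch(batch, rng)
print(augmented.shape, float(augmented.min()), float(augmented.max()))
```

Labels are left untouched, which is only valid when the transformation does not change the class; a horizontal flip is safe for most natural images but not, for example, for text or digits.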

When Should You See a Professional?

If you have methodically applied all six fixes, from cross-validation and regularization to architecture simplification and data augmentation, and your model still shows a severe performance gap between training and validation sets, the issue may go beyond standard algorithmic tuning. Persistent overfitting can indicate a fundamental problem with your data’s underlying structure or the need for a specialized model type.

Specific signs include consistently high variance across all cross-validation folds despite regularization, which may point to irreducible noise or non-stationarity in your data generation process. In such cases, the problem requires expert diagnosis of data pipelines. For issues related to deep learning frameworks themselves, consulting official documentation, like TensorFlow’s guide on overfitting, is a critical first step. If the model is for a high-stakes application (e.g., medical diagnosis or autonomous systems) and overfitting is still causing generalization failure, professional oversight is non-negotiable.

Your next step should be to consult with a senior data scientist, machine learning engineer, or the support team for your specific ML platform to audit your entire pipeline.

Frequently Asked Questions About Machine Learning Overfitting

What is the simplest way to check if my model is overfitting?

The simplest and most direct diagnostic is to plot your model’s learning curves. During training, log the loss (or accuracy) for both your training set and a held-out validation set after every epoch, then graph the two metrics over time. A clear sign of overfitting is when the training loss continues to decrease while the validation loss stops improving and begins to increase. This growing gap between the two lines visually confirms the model is memorizing training data noise at the expense of generalization. For a quick check before full training, use k-fold cross-validation; high variance in scores across folds is a strong early indicator.
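If you prefer a numeric check alongside the plot, the same signal can be read from the logged losses. The function below is a crude heuristic written for this example, with a hypothetical name and made-up loss values; it reports the epoch of lowest validation loss, the final train/validation gap, and whether validation loss has risen since its best point.

```python
def diagnose_learning_curves(train_loss, val_loss):
    """Return (best_epoch, final_gap, val_loss_rising) from per-epoch loss logs."""
    best_epoch = min(range(len(val_loss)), key=val_loss.__getitem__)
    final_gap = val_loss[-1] - train_loss[-1]
    # Validation loss is "rising" if its final value is worse than its best value.
    val_loss_rising = val_loss[-1] > val_loss[best_epoch]
    return best_epoch, final_gap, val_loss_rising

# Hypothetical logs: training loss keeps falling, validation loss turns upward.
train_loss = [1.00, 0.70, 0.50, 0.35, 0.25, 0.18]
val_loss = [1.05, 0.80, 0.65, 0.60, 0.66, 0.75]

best_epoch, final_gap, val_loss_rising = diagnose_learning_curves(train_loss, val_loss)
print(f"best epoch: {best_epoch}, final gap: {final_gap:.2f}, "
      f"validation loss rising: {val_loss_rising}")
```

Real validation curves are noisy, so treat a single upward tick with caution and look at the trend over several epochs.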

Can I use multiple overfitting fixes at the same time?

Absolutely, and in practice you often should. Combining techniques like dropout, L2 regularization, and early stopping is a standard and effective strategy. Each method constrains the model in a different way: dropout forces robust feature learning, L2 penalizes large weights, and early stopping prevents excessive training, so they complement one another. The key is to introduce them one at a time and monitor their impact. Start with the most relevant fix for your model type (e.g., dropout for neural nets), then incrementally add others while tuning their hyperparameters (like dropout rate or lambda value) to avoid over-regularizing, which can lead to underfitting.

How much training data do I need to prevent overfitting?

There’s no universal threshold, as the amount needed depends heavily on your model’s complexity and the problem’s difficulty. As a rough rule of thumb, the more parameters a model has, the more data it needs to generalize well. A simple linear model might generalize well with hundreds of samples, while a deep convolutional network may require tens or hundreds of thousands. If collecting more real data isn’t feasible, the fixes outlined here become essential. Prioritize data augmentation to artificially expand your dataset and use strong regularization to limit overfitting on limited data.

Is machine learning overfitting always worse than underfitting?

In the context of deploying a reliable system, overfitting is generally the more insidious problem, but both are detrimental. An underfit model is too simple: it fails to capture the underlying trend even in the training data. That is usually obvious and easier to fix by increasing model capacity. An overfit model, however, appears deceptively successful during development, showing excellent training metrics while failing silently on new data. This can lead to costly real-world failures. The primary goal in model tuning is therefore to navigate the bias-variance trade-off and find the sweet spot between the two, though practitioners often start with a model that slightly overfits and then apply targeted fixes.

Conclusion

Ultimately, to fix overfitting, you must build constraints into your development pipeline. We’ve walked through six strategies: using cross-validation for honest diagnosis, applying L1/L2 regularization and dropout to limit complexity, implementing early stopping to halt training before it fits noise, simplifying your model architecture to match your data, and augmenting data to teach robust features. Together, these techniques form a comprehensive defense. By applying them systematically, you shift your model’s goal from perfect recall on training examples to generalization on unseen data, which is the true measure of a successful model.

Start with Fix 1 (Cross-Validation) to diagnose your model’s current state, then work through the techniques most relevant to your project. Remember, a slightly imperfect model that works in the real world is far more valuable than a perfect one that doesn’t. We’d love to hear which fix resolved your overfitting problem; share your experience in the comments below or pass this guide to a colleague facing the same challenge.

Visit TrueFixGuides.com for more.

Written & Tested by: Antoine Lamine

Lead Systems Administrator