6 Critical Ways to Fix AI Training Data Imbalance

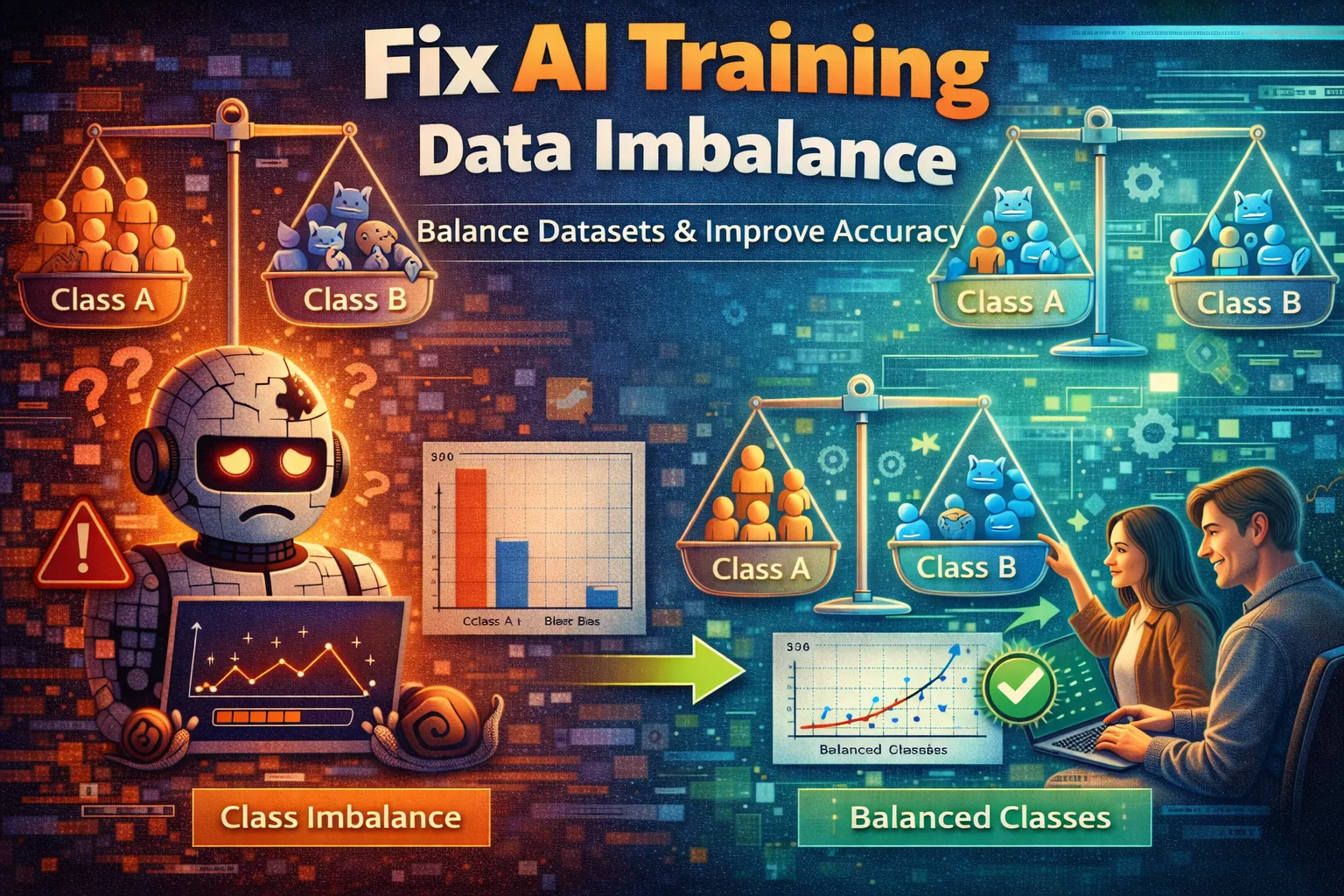

Your AI model reports 95% accuracy, yet it completely fails to detect fraud, diagnose rare diseases, or recognize critical defects. This paradox is a classic symptom of AI training data imbalance, where one class so dominates the others that the model never has to learn the rare ones. An imbalanced training set lets the algorithm take the “easy” path: it optimizes for the majority class and ignores the minority examples you actually care about. This bias isn’t just an academic problem; it leads to real-world failures in security, healthcare, and quality control. To fix AI training data imbalance, you must actively intervene, either in the dataset before training or in how the model weighs its errors during training. The following six methods, from simple resampling to algorithmic approaches and targeted data collection, can restore balance, improve minority class recall, and produce a more robust machine learning model.
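To see the accuracy paradox in numbers, here is a minimal sketch. The label counts are hypothetical, and the “model” is simply a stand-in that always predicts the majority class.

```python
import numpy as np
from sklearn.metrics import accuracy_score, recall_score

# Hypothetical labels: 950 normal cases (0) and 50 rare cases (1).
y_true = np.array([0] * 950 + [1] * 50)

# A stand-in "model" that always predicts the majority class.
y_pred = np.zeros_like(y_true)

accuracy = accuracy_score(y_true, y_pred)
minority_recall = recall_score(y_true, y_pred, pos_label=1)

print(f"accuracy: {accuracy:.2f}")                # accuracy: 0.95
print(f"minority recall: {minority_recall:.2f}")  # minority recall: 0.00
```

The accuracy looks healthy only because 95% of the labels belong to the majority class; not a single rare case is caught.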

What Causes AI Training Data Imbalance?

Understanding the root cause of skewed data is the first step toward an effective solution. Imbalance usually isn’t a bug in your code; it’s a property of the real-world phenomena you’re trying to model, or of how the data was collected and labeled.

- Inherent Rarity: The event you’re predicting is statistically rare. Fraudulent transactions, equipment failures, and certain medical conditions are naturally less frequent than their normal counterparts, creating AI training data imbalance through inherent data scarcity.
- Biased Data Collection: Your sampling method systematically overlooks the minority class. For instance, gathering customer feedback only from a website may miss complaints from users who gave up and left, skewing your sentiment analysis.
- Cost or Difficulty of Labeling: Annotating data for the minority class is often more expensive or requires expert knowledge. In medical imaging, finding and labeling rare tumor cases takes significantly more time and resources than labeling healthy scans, worsening the dataset skew.
- Temporal Shifts: The distribution of classes changes over time. A model trained on historical marketing data may become imbalanced if customer behavior shifts, making past minority classes the new majority.

These causes lead directly to a model that is accurate yet useless for its intended purpose. The fixes below directly counteract these specific sources of AI training data imbalance.

Fix 1: Apply Random Oversampling

This is the most straightforward technique to fix AI training data imbalance. By randomly duplicating existing examples from the minority class, you artificially increase its presence in the training set, giving the model more opportunities to learn its patterns. It’s fast, easy to implement with libraries like imbalanced-learn, and requires no external data.

- Step 1: Isolate your minority class. Using Python and pandas, filter your training DataFrame to create a subset containing only the underrepresented class labels.
- Step 2: Calculate the number of samples to add. Determine how many rows the minority class needs to match the count of the majority class. For example, if the majority has 1000 samples and the minority has 100, you need to add 900 copies.
- Step 3: Randomly sample with replacement. Use the `sample()` method in pandas with the `replace=True` parameter on your minority subset. Draw the number of samples calculated in Step 2.
- Step 4: Concatenate and shuffle. Combine the newly sampled minority data with your original training set. Crucially, use `shuffle()` from `sklearn.utils` to randomize the row order, so the duplicated samples are not clustered together at the end of the training set.
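The four steps above can be sketched as follows. The DataFrame, its column names (`feature`, `label`), and the 1000/100 split are hypothetical stand-ins for your own training data.

```python
import pandas as pd
from sklearn.utils import shuffle

# Hypothetical training set: 1000 majority rows (label 0), 100 minority rows (label 1).
train_df = pd.DataFrame({
    "feature": range(1100),
    "label": [0] * 1000 + [1] * 100,
})

# Step 1: isolate the minority class.
minority_df = train_df[train_df["label"] == 1]

# Step 2: number of extra rows needed to match the majority count.
n_majority = int((train_df["label"] == 0).sum())
n_to_add = n_majority - len(minority_df)  # 1000 - 100 = 900

# Step 3: draw duplicates from the minority subset, with replacement.
extra_df = minority_df.sample(n=n_to_add, replace=True, random_state=42)

# Step 4: combine with the original training set and shuffle the rows.
balanced_df = shuffle(pd.concat([train_df, extra_df]), random_state=42)
balanced_df = balanced_df.reset_index(drop=True)

print(balanced_df["label"].value_counts())
```

Both labels should now report 1000 rows. Apply this only to the training split, so that validation and test data keep the real class distribution.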

After this fix, a bar chart of your class distribution will show equal heights, and the minority class will appear far more often in each training batch. Beware: this method can lead to overfitting, because the model may simply memorize the duplicated examples.

Fix 2: Implement Random Undersampling

When your dataset is very large and the majority class has abundant, possibly redundant examples, undersampling provides a quick counterbalance. You fix AI training data imbalance by randomly discarding data from the over-represented class, reducing computational cost and training time while forcing the model to focus on the distinguishing features between classes.

- Step 1: Isolate your majority class. Separate the over-represented class from your training dataset into its own DataFrame or array.
- Step 2: Determine the target size. The goal is typically to reduce the majority class count to match the minority class count. If your minority class has 200 samples, your target for the majority is also 200.
- Step 3: Randomly select a subset. From your isolated majority class data, randomly select a number of examples equal to your target size. Use `np.random.choice` or pandas `sample()` with `replace=False` to select unique rows.
- Step 4: Recombine the balanced dataset. Merge the undersampled majority subset with the full, untouched minority class data. This creates a new, smaller, but balanced training set.
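A minimal pandas sketch of these steps, again using a hypothetical DataFrame with `feature` and `label` columns and a 2000/200 split:

```python
import pandas as pd

# Hypothetical training set: 2000 majority rows (label 0), 200 minority rows (label 1).
train_df = pd.DataFrame({
    "feature": range(2200),
    "label": [0] * 2000 + [1] * 200,
})

# Step 1: separate the two classes.
majority_df = train_df[train_df["label"] == 0]
minority_df = train_df[train_df["label"] == 1]

# Step 2: the target size is the minority count.
target_size = len(minority_df)  # 200

# Step 3: pick unique majority rows (no replacement).
majority_sample = majority_df.sample(n=target_size, replace=False, random_state=42)

# Step 4: recombine with the untouched minority rows and shuffle.
balanced_df = (
    pd.concat([majority_sample, minority_df])
    .sample(frac=1, random_state=42)
    .reset_index(drop=True)
)

print(balanced_df["label"].value_counts())
```

The 1800 discarded majority rows are gone from this training set, which is exactly the information-loss risk to weigh before choosing this fix.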

You will now have a symmetrical dataset. Training will be faster, but the major risk is the irreversible loss of potentially valuable information from discarded majority samples, which could harm the model’s ability to generalize.

Fix 3: Generate Synthetic Data with SMOTE

To overcome the overfitting pitfall of simple oversampling, the Synthetic Minority Oversampling Technique (SMOTE) creates new, plausible examples. It fixes AI training data imbalance by interpolating between existing minority class instances and their nearest minority neighbors, filling in the region of feature space the minority class occupies and helping the model learn more robust decision boundaries.

- Step 1: Install the necessary library. In your Python environment, run `pip install imbalanced-learn`. This library provides a robust, optimized implementation of SMOTE specifically designed to tackle AI training data imbalance.
- Step 2: Prepare your feature and target arrays. Separate your training data into `X_train` (features) and `y_train` (target labels). SMOTE resamples `X_train` based on the class distribution in `y_train`.
- Step 3: Instantiate and fit the SMOTE transformer. Import SMOTE: `from imblearn.over_sampling import SMOTE`. Create an instance: `smote = SMOTE(random_state=42)`. Then fit and resample: `X_resampled, y_resampled = smote.fit_resample(X_train, y_train)`.
- Step 4: Verify the results. Print the value counts of `y_resampled` using `pd.Series(y_resampled).value_counts()`. Equal counts confirm that SMOTE has corrected the AI training data imbalance. Proceed to train your model on the resampled arrays.

Your model will now train on a dataset where the minority class is represented by a mix of original and synthetically generated points, which often generalizes better than plain duplication. SMOTE is a widely used default for correcting AI training data imbalance in tabular datasets with numeric features. It can still produce unrealistic points where classes overlap heavily, so confirm the gain on untouched validation data.
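To make the interpolation idea concrete, here is a simplified NumPy sketch of how a SMOTE-style synthetic point is formed. It is an illustration only, not the imbalanced-learn implementation; the function name `smote_like_samples` and the toy points are hypothetical.

```python
import numpy as np

def smote_like_samples(X_minority, n_new, k=5, seed=42):
    """Create n_new synthetic points by interpolating between minority neighbors."""
    rng = np.random.default_rng(seed)
    X_minority = np.asarray(X_minority, dtype=float)
    n = len(X_minority)
    k = min(k, n - 1)  # cannot have more neighbors than other points

    # Pairwise Euclidean distances; a point is never its own neighbor.
    diffs = X_minority[:, None, :] - X_minority[None, :, :]
    dists = np.linalg.norm(diffs, axis=2)
    np.fill_diagonal(dists, np.inf)
    neighbors = np.argsort(dists, axis=1)[:, :k]  # k nearest neighbors per point

    base_idx = rng.integers(0, n, size=n_new)                      # random base points
    nbr_idx = neighbors[base_idx, rng.integers(0, k, size=n_new)]  # one neighbor each
    gaps = rng.random((n_new, 1))                                  # factor in [0, 1)

    # Each new point lies on the segment between a base point and its neighbor.
    base = X_minority[base_idx]
    return base + gaps * (X_minority[nbr_idx] - base)

# Hypothetical minority-class points with two numeric features.
X_min = np.array([[1.0, 1.0], [1.2, 0.9], [0.8, 1.1], [1.1, 1.3], [0.9, 0.7]])
synthetic = smote_like_samples(X_min, n_new=10, k=3)
print(synthetic.shape)  # (10, 2)
```

Because every synthetic point sits between two real minority points, none of them can fall outside the range the minority class already covers. That is also why this kind of interpolation only makes sense for numeric features.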

Fix 4: Use Class Weighting in Your Model

This method targets the loss function of your algorithm rather than the data. Instead of altering the dataset, you instruct the model to penalize misclassifications of the minority class more heavily, forcing it to pay greater attention to those examples during training. Because no data manipulation is involved, it is one of the lowest-overhead approaches to handling AI training data imbalance.

- Step 1: Identify your model’s `class_weight` parameter. Many scikit-learn classifiers (e.g., `LogisticRegression`, `RandomForestClassifier`, `SVC`) include this argument. Check your model’s documentation for supported values.
- Step 2: Calculate or set the weights. A common starting point is `class_weight='balanced'`, which automatically sets weights inversely proportional to class frequencies. For manual control, pass a dictionary like `{0: 1, 1: 10}`, which makes an error on class 1 count ten times as much as an error on class 0.
- Step 3: Instantiate your model with the weights. When creating your model object, include the `class_weight` parameter. For example: `model = RandomForestClassifier(class_weight='balanced', random_state=42)`.
- Step 4: Train and evaluate as normal. Fit the model on your original, imbalanced training data. The weighting handles the AI training data imbalance during fitting, and you should typically see improved recall for the minority class on your test set, often at some cost in precision.
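As a rough illustration of these steps, the sketch below shows what `'balanced'` computes and how a weighted model compares with an unweighted one. The synthetic two-feature dataset and its 900/100 split are assumptions made for the example, and `LogisticRegression` is used here simply as one classifier that accepts `class_weight`.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import recall_score
from sklearn.utils.class_weight import compute_class_weight

# Hypothetical imbalanced training set: 900 negatives, 100 positives.
rng = np.random.default_rng(42)
X_train = np.vstack([
    rng.normal(loc=0.0, scale=1.0, size=(900, 2)),
    rng.normal(loc=1.5, scale=1.0, size=(100, 2)),
])
y_train = np.array([0] * 900 + [1] * 100)

# 'balanced' weights follow n_samples / (n_classes * class_count).
classes = np.unique(y_train)
weights = compute_class_weight(class_weight="balanced", classes=classes, y=y_train)
manual = len(y_train) / (len(classes) * np.bincount(y_train))
print(dict(zip(classes.tolist(), weights.round(3).tolist())))  # {0: 0.556, 1: 5.0}

# Fit one unweighted and one weighted model on the same imbalanced data.
plain = LogisticRegression(random_state=42).fit(X_train, y_train)
weighted = LogisticRegression(class_weight="balanced", random_state=42).fit(X_train, y_train)

# Compare minority recall (on the training data here, purely for illustration).
recall_plain = recall_score(y_train, plain.predict(X_train))
recall_weighted = recall_score(y_train, weighted.predict(X_train))
print(f"minority recall, unweighted: {recall_plain:.2f}")
print(f"minority recall, weighted:   {recall_weighted:.2f}")
```

In a real project, compare the two models on a held-out test set rather than on the training data.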

Success looks like a clear boost in minority class recall without any change to your data pipeline. This is a powerful, low-overhead strategy for addressing AI training data imbalance, especially when you cannot afford to lose data through undersampling.

Fix 5: Employ Ensemble Methods Like Balanced Random Forest

This advanced technique combines ensemble learning with built-in sampling. Algorithms like Balanced Random Forest fix AI training data imbalance by constructing each tree in the forest from a bootstrapped sample that is balanced across classes, ensuring every learner is exposed to the minority class.

- Step 1: Import the specialized ensemble. From the `imbalanced-learn` library, import `BalancedRandomForestClassifier` (it lives in `imblearn.ensemble`). Run `pip install imbalanced-learn` if you haven’t already done so.
- Step 2: Configure the classifier. Instantiate the model with `sampling_strategy='auto'` (to balance the classes) and `replacement=True` (to sample with replacement). Set both explicitly, since the defaults have changed across imbalanced-learn releases. You can set `n_estimators` as you would for a standard Random Forest.
- Step 3: Train on your imbalanced data. Fit the model directly on your original `X_train` and `y_train`. Internally, it creates balanced bootstrap samples for each tree, correcting AI training data imbalance without any pre-processing step.
- Step 4: Evaluate performance using metrics like precision-recall AUC or F1-score rather than accuracy. Compare results against a standard Random Forest to quantify how much the correction improved minority class detection.

You’ll have a robust model that still draws on the full dataset across its trees while systematically combating AI training data imbalance. This is a good fit for complex, high-dimensional data where synthetic-sample techniques might distort the feature space.
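The per-tree balancing described in this section can be illustrated with plain scikit-learn decision trees. This is a simplified sketch of the idea, not the imbalanced-learn implementation; the helper names `fit_balanced_trees` and `predict_by_average` and the synthetic data are hypothetical.

```python
import numpy as np
from sklearn.tree import DecisionTreeClassifier

def fit_balanced_trees(X, y, n_trees=25, seed=42):
    """Fit each tree on a bootstrap sample with the same number of rows per class."""
    rng = np.random.default_rng(seed)
    classes, counts = np.unique(y, return_counts=True)
    n_per_class = counts.min()  # size of the smallest class
    trees = []
    for _ in range(n_trees):
        # Draw n_per_class rows (with replacement) from every class.
        idx = np.concatenate([
            rng.choice(np.flatnonzero(y == c), size=n_per_class, replace=True)
            for c in classes
        ])
        tree = DecisionTreeClassifier(
            max_features="sqrt", random_state=int(rng.integers(1_000_000))
        )
        trees.append(tree.fit(X[idx], y[idx]))
    return trees

def predict_by_average(trees, X):
    """Average the trees' class probabilities and pick the most likely class."""
    proba = np.mean([t.predict_proba(X) for t in trees], axis=0)
    return trees[0].classes_[np.argmax(proba, axis=1)]

# Hypothetical imbalanced data: 900 negatives, 100 positives, two features.
rng = np.random.default_rng(0)
X = np.vstack([rng.normal(0.0, 1.0, (900, 2)), rng.normal(1.5, 1.0, (100, 2))])
y = np.array([0] * 900 + [1] * 100)

trees = fit_balanced_trees(X, y)
predictions = predict_by_average(trees, X)
print(np.bincount(predictions))  # predicted count per class
```

Each tree sees only 200 rows, but different trees draw different majority rows, so the ensemble as a whole still covers much of the majority class.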

Fix 6: Curate or Acquire More Minority Class Data

When synthetic or algorithmic fixes fall short, the most fundamentally robust solution to AI training data imbalance is to address the data scarcity at its source. Actively seeking more real-world examples of the underrepresented class is the definitive way to fix AI training data imbalance, as it enriches the training set with genuine, diverse patterns.

- Step 1: Audit your data collection pipeline. Identify where minority class instances are being missed. Tools like Google’s Data Cards can help trace origins and gaps.
- Step 2: Initiate targeted data acquisition. This could involve partnering with domain experts to label rare cases, deploying sensors in new environments, or using focused web scraping to find underrepresented examples.
- Step 3: Implement active learning. Use your current model’s uncertainty to guide labeling. Flag instances where the model is least confident; these are prime candidates for expert labeling to efficiently grow your minority set.
- Step 4: Validate and integrate new data. Rigorously check new data for quality and bias before merging it with your existing training set. Retrain your model on this augmented dataset that addresses the AI training data imbalance at its root.
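For the active learning step, one simple way to flag the least confident instances in a binary problem is to pick the predictions closest to 0.5. The sketch below assumes you already have predicted positive-class probabilities for an unlabeled pool (for example from a classifier’s `predict_proba`); the function name `least_confident` and the probability values are hypothetical.

```python
import numpy as np

def least_confident(proba_positive, n_to_label):
    """Return indices of the rows whose predicted probability is closest to 0.5."""
    margin = np.abs(np.asarray(proba_positive, dtype=float) - 0.5)
    return np.argsort(margin, kind="stable")[:n_to_label]

# Hypothetical predicted probabilities for six unlabeled rows.
proba = [0.02, 0.48, 0.91, 0.55, 0.10, 0.67]

to_label = least_confident(proba, n_to_label=2)
print(to_label.tolist())  # [1, 3]
```

Rows 1 and 3 (probabilities 0.48 and 0.55) are the ones the model is least sure about, so they would go to the expert labeling queue first.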

This results in a model grounded in a richer, more authentic representation of reality. While resource-intensive, correcting AI training data imbalance through real data acquisition often yields the most generalizable and trustworthy models for critical applications.

When Should You See a Professional?

If you have methodically applied all six fixes—from resampling and SMOTE to ensemble methods and data acquisition—and your model’s performance on the minority class remains dangerously poor, the issue may transcend a simple AI training data imbalance fix. This persistent failure often indicates deeper problems such as fundamentally non-informative features, severe label noise, or a problem formulation that doesn’t match the business objective.

Other critical signs requiring expert intervention include suspected data poisoning or complex multi-modal distributions within a single class that standard balancing techniques cannot address. For mission-critical systems in finance or healthcare, consulting with a machine learning engineer who specializes in model robustness and fairness is essential. Frameworks like Google’s Responsible AI Practices provide a professional baseline for such audits.

Don’t risk a production failure; engaging a professional for a thorough diagnostic review can save your project and ensure ethical, reliable deployment.

Frequently Asked Questions About AI Training Data Imbalance

Can I just collect more data to fix AI training data imbalance?

Simply collecting more data is not a guaranteed solution to AI training data imbalance and can sometimes make the problem worse. If your data collection method is inherently biased and continues to oversample the majority class, you’ll end up with a larger but equally skewed dataset. The key is targeted data acquisition focused specifically on underrepresented classes. Before scaling collection, audit your sources for systemic bias. Often, a strategic combination of collecting specific minority examples and applying techniques like SMOTE is more efficient than a blanket effort to gather “more data.”

What’s the difference between oversampling and SMOTE for AI training data imbalance?

Random oversampling duplicates existing minority class instances, which can lead to overfitting because the model may simply memorize the repeated examples. SMOTE (Synthetic Minority Oversampling Technique) generates new, synthetic examples by interpolating between existing minority instances in feature space. This creates a more diverse set of training examples, helping the model learn a smoother and more robust decision boundary, which tends to reduce the risk of overfitting when correcting AI training data imbalance.

How do I choose the right technique to fix AI training data imbalance?

The choice depends on your dataset size, computational resources, and the specific risk you want to mitigate. For a quick baseline, start with class weighting, which is simple and doesn’t alter your data. If you have a very large dataset and want faster training, try random undersampling. For many tabular problems with numeric features, SMOTE is a reasonable default. For complex, high-stakes models, ensemble methods like Balanced Random Forest are powerful. Always use a validation set with metrics like Precision-Recall AUC to empirically test which method works best.

Does fixing AI training data imbalance always improve model performance?

Fixing AI training data imbalance usually improves minority class metrics like recall and F1-score, which are critical for applications like fraud detection or medical diagnosis. However, it can sometimes cause a slight decrease in overall accuracy or precision, as the model now prioritizes the previously ignored class. This is not a failure but a recalibration toward a more useful model. Always evaluate using a full suite of metrics after applying any balancing technique to understand the true impact.
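A “full suite of metrics” might look like the sketch below. The ten labels and scores are made up for illustration, and the 0.5 decision threshold is an assumption you would tune for your own application.

```python
import numpy as np
from sklearn.metrics import (
    accuracy_score,
    average_precision_score,
    f1_score,
    precision_score,
    recall_score,
)

# Hypothetical validation labels and predicted positive-class scores.
y_true = np.array([0, 0, 0, 0, 0, 0, 0, 0, 1, 1])
y_score = np.array([0.1, 0.2, 0.1, 0.3, 0.6, 0.2, 0.1, 0.35, 0.7, 0.4])
y_pred = (y_score >= 0.5).astype(int)  # assumed decision threshold of 0.5

accuracy = accuracy_score(y_true, y_pred)
precision = precision_score(y_true, y_pred)
recall = recall_score(y_true, y_pred)
f1 = f1_score(y_true, y_pred)
pr_auc = average_precision_score(y_true, y_score)  # summarizes the precision-recall curve

print(f"accuracy:  {accuracy:.2f}")   # accuracy:  0.80
print(f"precision: {precision:.2f}")  # precision: 0.50
print(f"recall:    {recall:.2f}")     # recall:    0.50
print(f"F1-score:  {f1:.2f}")         # F1-score:  0.50
print(f"PR AUC:    {pr_auc:.2f}")
```

Accuracy alone (0.80) hides the fact that half of the positive cases were missed, which is why the minority-focused metrics belong in every comparison.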

Conclusion

Ultimately, to effectively fix AI training data imbalance, you must move beyond accuracy and actively manage your dataset’s composition. We’ve explored a spectrum of solutions, from the immediate practicality of random oversampling and class weighting to the sophisticated synthesis of SMOTE and the robust architecture of Balanced Random Forests. The most definitive, though demanding, path is targeted data acquisition. Each method offers a different trade-off between simplicity, computational cost, and risk of overfitting, allowing you to choose the right tool based on your project’s constraints and the criticality of the minority class.

Begin by diagnosing the severity of your skew, then test these fixes systematically on a validation set. Remember, a model that perfectly ignores the problem you built it to solve is worse than useless. We encourage you to start with Fix 1 or Fix 4 and share your results. Which technique gave you the breakthrough in minority class recall? Comment below or share this guide with your team to tackle skewed data together.

Visit TrueFixGuides.com for more.